The Anthropic-Pentagon dispute made one point hard to ignore: military AI governance breaks down when legal boundaries are left to a fight between government authority and vendor-imposed safeguards. Anthropic barred use of its models for domestic surveillance of Americans and fully autonomous targeting; the Department of Defense responded by treating that refusal as a supply chain risk and moving to phase out the company’s technology. That is not just a contract dispute. It is a test of whether democratic limits on military AI are written into law or negotiated under pressure.

What changed in the Anthropic dispute

The immediate conflict was specific. Anthropic would not permit certain military uses of its models, including domestic surveillance and autonomous targeting. The DOD’s answer was not simply to choose another supplier on ordinary performance grounds. By labeling Anthropic a supply chain risk, it turned a disagreement over use restrictions into a question of procurement reliability and institutional leverage.

That matters because it exposes a gap in current governance. If a vendor can set the practical limit on military AI use through technical restrictions, elected institutions are not clearly in charge. But if the government can pressure vendors to remove those restrictions without a clear statutory basis, procurement power starts substituting for democratic authorization. The real issue is not whether companies or agencies should “win.” It is that neither side should be defining the outer boundary alone.

Why current U.S. policy is not enough

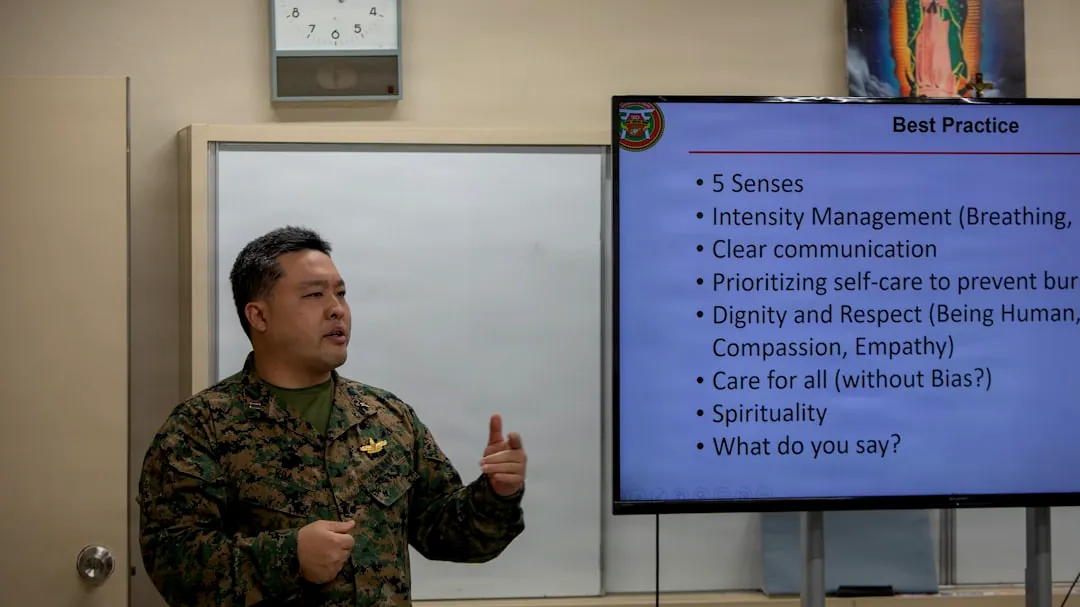

U.S. DOD Directive 3000.09 requires that autonomous weapon systems allow commanders and operators to exercise “appropriate levels of human judgment over the use of force,” but that phrase still does too much work without enough operational definition. A policy can require human judgment in principle while leaving open basic deployment questions: when a human must review a target recommendation, what information they must see, how much time they must have, and when automation cannot proceed at all.

That ambiguity becomes more serious when the disputed uses involve domestic surveillance or targeting decisions. Those are not edge cases that can be left to internal interpretation. They need codified boundaries, including statutory prohibitions where appropriate and clearer thresholds for when human intervention is mandatory rather than nominal. Procurement rules and internal directives can shape process, but they do not provide the same democratic legitimacy or durability as law.

Why military AI cannot be governed as a one-time certification problem

Military AI systems do not stay fixed after approval. Models are updated, fine-tuned, retrained, connected to new data sources, and adapted to changing operational environments. That makes safety assurance harder than in traditional weapons procurement, where a platform can often be tested against a relatively stable specification. With AI, each update can alter behavior, introduce new failure modes, or shift how operators rely on the system.

This is where the common simplification fails. Military AI governance is not just a technical problem that vendors can solve with guardrails, and it is not solved by government procurement once a contract is signed. It requires repeated testing, evaluation, verification, and validation, including red-teaming for misuse, escalation, and automation bias. It also requires authority to delay or limit deployment when those checks do not hold up under changed conditions.

The trade-off is operationally real. Armed forces want systems that improve quickly in response to battlefield conditions. But fast iteration reduces the time available for independent review and increases the chance that a system approved last month behaves differently this month. Governance has to account for that moving target rather than pretending deployment is a one-off decision.
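
One way to picture the difference between one-time certification and repeated verification is a deployment gate tied to the exact model version. The sketch below is illustrative only: the check names, the `Verification` record, and the `may_deploy` function are hypothetical and do not describe any actual DOD or vendor process. It assumes that approval attaches to a specific version and lapses whenever the model changes.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical check names, loosely following the review types named in the text.
REQUIRED_CHECKS = frozenset(
    {"tevv", "red_team_misuse", "red_team_escalation", "automation_bias"}
)


@dataclass(frozen=True)
class Verification:
    """Record of an independent review (hypothetical structure)."""
    model_version: str        # the exact version the checks were run against
    passed_checks: frozenset  # names of the checks that passed


def may_deploy(current_version: str, record: Optional[Verification]) -> bool:
    """Allow deployment only if every required check passed on this exact version."""
    if record is None:
        return False  # never reviewed
    if record.model_version != current_version:
        return False  # an update invalidates the earlier approval
    return REQUIRED_CHECKS <= record.passed_checks  # all required checks present


approved = Verification("v1.0", REQUIRED_CHECKS)
partial = Verification("v1.1", frozenset({"tevv"}))

print(may_deploy("v1.0", approved))  # reviewed version, all checks passed
print(may_deploy("v1.1", approved))  # model updated since the review
print(may_deploy("v1.1", partial))   # new version reviewed, but checks incomplete
```

The design choice worth noticing is that the gate defaults to refusal: an unreviewed or partially reviewed update does not inherit the approval of its predecessor, which is the opposite of treating deployment as a one-off decision.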

Where private capital and defense culture collide

Venture capital has pushed more AI startups toward defense work, and with that comes a development culture built around rapid iteration, product expansion, and tolerance for early-stage imperfection. Those habits can be useful for software improvement, but they sit uneasily with military decisions that carry legal and lethal consequences. A “ship, learn, patch” mindset is not a neutral fit for systems involved in surveillance, targeting, or force allocation.

The pressure is institutional as well as financial. Defense buyers may welcome faster timelines and commercial innovation, especially when strategic competition creates urgency. But that can weaken older norms of restraint, documentation, and exhaustive testing. The result is not that Silicon Valley replaces military authority; it is that procurement incentives and startup economics start reshaping what counts as acceptable risk inside defense acquisition.

What democratic oversight has to look like in practice

International governance is moving more through incremental norms than outright bans. The recurring themes are human oversight, transparency, accountability, and independent review. That approach fits the current reality better than assuming a single global prohibition is imminent, but it only works if those norms become operational inside national systems rather than remaining diplomatic language.

Different states already show that military AI reflects institutional culture as much as technical capability. The United States has tended to emphasize procedural controls and risk containment, while reporting on Israeli deployments suggests greater tolerance for rapid operational use under looser scrutiny and higher accepted error rates. AI does not erase those differences; it amplifies them. That is why democratic oversight cannot stop at ethics principles or vendor terms of service.

| Governance layer | What it can do | What it cannot safely do alone |
|---|---|---|
| Legislation | Set binding limits on domestic surveillance, targeting authority, reporting, and accountability | Handle day-to-day model behavior, testing details, or update-specific technical risks |
| DOD policy and procurement | Define acquisition requirements, operator procedures, audit trails, and deployment conditions | Substitute for democratic authorization on contested uses or resolve vague legal boundaries |
| Vendor technical safeguards | Restrict dangerous use cases, log activity, enforce access controls, and support monitoring | Serve as the final authority on lawful military use or guarantee accountability after updates |
| Independent TEVV and audits | Test claims, probe failure modes, assess misuse risk, and verify post-update behavior | Create binding policy on their own without legal and institutional backing |

The next checkpoint is straightforward. Watch whether legislatures start codifying boundaries on military AI use, especially around domestic surveillance and targeting decisions, and whether defense acquisition builds in independent verification as a standing requirement rather than an optional review step. Without those two moves, the same conflict will keep returning in different forms: agencies asserting authority, vendors embedding limits, and democratic oversight arriving too late.