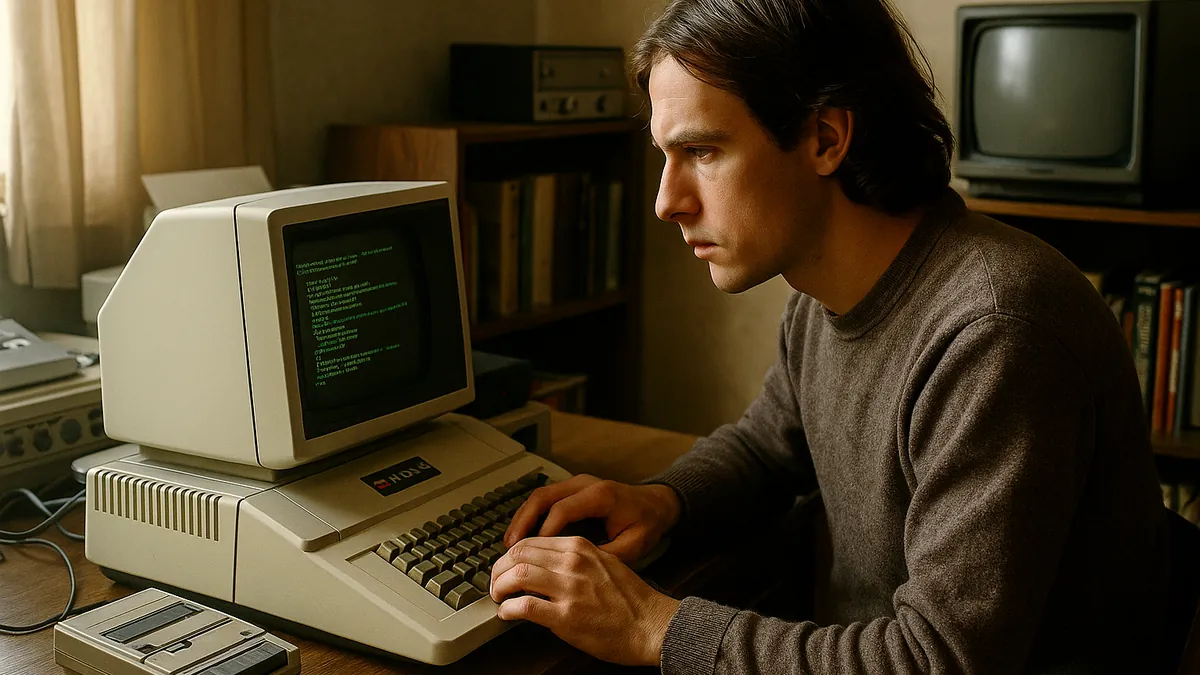

ByteDance’s DeerFlow 2.0 matters because it moves the discussion from agent reasoning to agent runtime. The release is not mainly about better prompts or nicer workflow chaining. It packages the parts autonomous agents usually lack in production: isolated execution, persistent state, and controlled multi-agent coordination for tasks that run longer than a single chat turn.

What changed in DeerFlow 2.0

The key distinction is that DeerFlow 2.0 is built as a runtime environment for agents that need to act, not just plan. Many agent stacks concentrate on model calls, prompt templates, or orchestration logic. DeerFlow instead puts the execution layer at the center, so an agent can run code, handle files, browse the web, and keep working across sessions without treating each request as a fresh start.

That corrects an easy misreading. DeerFlow is not just another prompt engineering tool with agent branding. Its value is in the operational layer: sandboxed execution, long-term memory, and a lead-agent system that can spawn specialized sub-agents under explicit limits. That is the difference between a demo workflow and something closer to a deployable autonomous system.

Why the runtime layer is the real story

DeerFlow uses Docker-based sandboxing so agents can execute code and manipulate files without directly exposing the host system. That matters because the practical risk in autonomous agents is rarely the text generation step by itself. The risk appears when the system starts taking actions, touching local resources, or running tools repeatedly over long tasks. Isolation reduces the blast radius when an agent makes a mistake or encounters untrusted inputs.
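To make the isolation idea concrete, here is a minimal sketch of how a runtime might assemble a locked-down `docker run` invocation for one agent task. It is not DeerFlow's actual implementation: the function name, the image, the resource limits, and the choice to disable networking are all assumptions for illustration. Only standard Docker CLI flags are used.

```python
from pathlib import Path


def build_sandbox_command(workspace: Path, image: str, script: str) -> list[str]:
    """Build a `docker run` argv that executes one script in a restricted container.

    Hypothetical helper: the limits below are illustrative defaults, not DeerFlow's.
    """
    return [
        "docker", "run",
        "--rm",                    # delete the container when the task exits
        "--network", "none",       # assumption: untrusted code gets no network
        "--read-only",             # root filesystem cannot be modified
        "--cap-drop", "ALL",       # drop all Linux capabilities
        "--memory", "512m",        # cap memory use
        "--cpus", "1",             # cap CPU use
        "--pids-limit", "128",     # guard against fork bombs
        "-v", f"{workspace.absolute()}:/workspace",  # the only writable host path
        "-w", "/workspace",
        image,
        "python", script,
    ]


if __name__ == "__main__":
    cmd = build_sandbox_command(Path("/tmp/agent-work"), "python:3.12-slim", "task.py")
    print(" ".join(cmd))
```

The point of the sketch is that the host exposes exactly one directory to the agent, so a mistaken or malicious script can damage the workspace but not the rest of the machine. A task that needs web access would require a less restrictive network setting than the one assumed here.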

The platform supports multiple execution modes: local, Docker container, and Kubernetes pod provisioning. Those options are not interchangeable decoration. Local mode may suit development, Docker gives stronger isolation for standard deployments, and Kubernetes becomes relevant when teams need scheduling, scaling, and policy controls across larger environments. In other words, DeerFlow is designed to meet different infrastructure realities rather than assuming one deployment pattern.
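The trade-off between the three modes can be written down as a small decision function. This is only a sketch of the reasoning in the paragraph above; `ExecutionMode` and `choose_mode` are hypothetical names, not part of DeerFlow's configuration surface.

```python
from enum import Enum


class ExecutionMode(Enum):
    LOCAL = "local"
    DOCKER = "docker"
    KUBERNETES = "kubernetes"


def choose_mode(*, development: bool, needs_cluster_scheduling: bool) -> ExecutionMode:
    """Pick an execution mode from two coarse requirements (illustrative only)."""
    if needs_cluster_scheduling:
        # Scheduling, scaling, and policy controls across larger environments
        return ExecutionMode.KUBERNETES
    if development:
        # Fast iteration on a developer machine, weakest isolation
        return ExecutionMode.LOCAL
    # Default for standard deployments: stronger isolation than local mode
    return ExecutionMode.DOCKER


if __name__ == "__main__":
    print(choose_mode(development=False, needs_cluster_scheduling=False).value)
```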

How DeerFlow coordinates longer and more complex work

The architecture uses a lead agent that delegates subtasks to specialized sub-agents. Instead of one agent trying to do everything serially, DeerFlow can break work into parallel branches with role separation. That makes a difference for multi-step jobs such as research, coding, analysis, and synthesis, where waiting for one monolithic agent often wastes time and context.

DeerFlow also puts hard boundaries around that autonomy. The system allows at most three concurrent sub-agents per turn and enforces 15-minute execution windows. Those controls are operational safeguards, not minor settings. They contain resource consumption, reduce the chance of runaway processes, and make behavior more predictable when agents act with less supervision.
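A concurrency cap and an execution window of this kind can be sketched with `asyncio`. The limits of three sub-agents and 15 minutes come from the description above; everything else, including `run_subagent` and `run_with_limits`, is a hypothetical stand-in rather than DeerFlow's code.

```python
import asyncio

MAX_CONCURRENT_SUBAGENTS = 3        # limit described in the article
SUBAGENT_TIMEOUT_SECONDS = 15 * 60  # 15-minute execution window


async def run_subagent(name: str, work_seconds: float) -> str:
    """Stand-in for a real sub-agent; it only sleeps."""
    await asyncio.sleep(work_seconds)
    return f"{name}: done"


async def run_with_limits(
    tasks: list[tuple[str, float]],
    max_concurrent: int = MAX_CONCURRENT_SUBAGENTS,
    timeout: float = SUBAGENT_TIMEOUT_SECONDS,
) -> list[str]:
    """Run all tasks, never more than `max_concurrent` at once, each with a timeout."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def guarded(name: str, work_seconds: float) -> str:
        async with semaphore:  # wait here until a slot is free
            try:
                return await asyncio.wait_for(run_subagent(name, work_seconds), timeout)
            except asyncio.TimeoutError:
                # The sub-agent is cancelled; report it instead of hanging the turn
                return f"{name}: timed out"

    # gather returns results in the same order as the input tasks
    return await asyncio.gather(*(guarded(n, s) for n, s in tasks))


if __name__ == "__main__":
    jobs = [("research", 0.01), ("coding", 0.01), ("slow-analysis", 0.5)]
    print(asyncio.run(run_with_limits(jobs, timeout=0.1)))
```

The semaphore bounds cost, and the timeout bounds duration. A lead agent built this way always gets an answer per sub-agent, even if that answer is a timeout notice.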

Persistent long-term memory adds another missing piece. DeerFlow stores user preferences, domain facts, and task context across sessions, then asynchronously updates and injects relevant context back into prompts. The asynchronous design matters because it avoids turning every interaction into a memory write bottleneck. The practical result is continuity without forcing the whole system to pause on every update.
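The asynchronous pattern can be illustrated with a queue and a background writer. This is a minimal sketch under stated assumptions: `MemoryStore`, its in-memory dictionary, and the injected prompt format are invented for the example and say nothing about how DeerFlow actually persists memory.

```python
import asyncio
import contextlib


class MemoryStore:
    """Hypothetical memory store: writes are queued, reads shape the next prompt."""

    def __init__(self) -> None:
        self.facts: dict[str, str] = {}
        self._pending: asyncio.Queue = asyncio.Queue()

    def record(self, key: str, value: str) -> None:
        """Queue an update and return immediately, so the caller never blocks."""
        self._pending.put_nowait((key, value))

    async def writer(self) -> None:
        """Background task that applies queued updates one at a time."""
        while True:
            key, value = await self._pending.get()
            await asyncio.sleep(0)  # stand-in for a slow database write
            self.facts[key] = value
            self._pending.task_done()

    async def flush(self) -> None:
        """Wait until every queued update has been applied."""
        await self._pending.join()

    def inject(self, prompt: str) -> str:
        """Prepend stored context to a prompt."""
        if not self.facts:
            return prompt
        context = "\n".join(f"- {k}: {v}" for k, v in sorted(self.facts.items()))
        return f"Known context:\n{context}\n\n{prompt}"


async def demo() -> str:
    store = MemoryStore()
    writer = asyncio.create_task(store.writer())
    store.record("language", "Python")          # returns at once
    store.record("report_format", "Markdown")   # returns at once
    await store.flush()                         # only the demo waits for writes
    writer.cancel()
    with contextlib.suppress(asyncio.CancelledError):
        await writer
    return store.inject("Summarize the findings.")


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

In this sketch the interaction path only calls `record`, which never waits on storage. The cost of that design is that a prompt built immediately after a write may not yet include it.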

What the deployment options and extension model actually enable

DeerFlow’s modular skill system lets teams define reusable capabilities as Markdown-based workflows. That lowers the cost of extending the agent without rewriting the runtime itself. A team can add or swap skills for browsing, code execution, analysis, or content tasks while keeping the same execution and memory foundation underneath. That separation is useful for organizations that want custom behavior without maintaining a fork of the core platform.
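To show why a Markdown-defined skill is cheap to add, here is a sketch of a loader for a made-up skill layout: a top-level heading as the skill name and a numbered list as its steps. The layout, the `Skill` class, and `parse_skill` are assumptions for illustration; DeerFlow's real skill format may differ.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    steps: list[str] = field(default_factory=list)


def parse_skill(markdown: str) -> Skill:
    """Parse a hypothetical skill file: '# Name' plus numbered steps."""
    name = "unnamed"
    steps: list[str] = []
    for raw_line in markdown.splitlines():
        line = raw_line.strip()
        if line.startswith("# "):
            name = line[2:].strip()
            continue
        match = re.match(r"\d+\.\s+(.*)", line)
        if match:
            steps.append(match.group(1))
    return Skill(name=name, steps=steps)


EXAMPLE_SKILL = """\
# Competitor research

1. Search the web for recent announcements.
2. Summarize each source in two sentences.
3. Write the findings to report.md.
"""

if __name__ == "__main__":
    skill = parse_skill(EXAMPLE_SKILL)
    print(skill.name, len(skill.steps))
```

Because the skill is data rather than code, adding or swapping one in this model means editing a text file, not touching the runtime that executes it.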

DeerFlow also exposes REST APIs, a web interface, and messaging integrations for Slack, Telegram, and Feishu. That means it can be inserted into existing operational channels instead of forcing users into a new standalone interface. For many deployments, that is the difference between an internal experiment and a system that employees or operators will actually use. It can also work without requiring public IP exposure, which is relevant for more restricted enterprise environments.

| Component | What DeerFlow 2.0 provides | Why it matters in production |
|---|---|---|
| Execution | Sandboxed code, file, and web operations via local, Docker, or Kubernetes modes | Reduces host risk and fits different security and scale requirements |
| Coordination | Lead agent with specialized sub-agents, concurrency limits, and timeouts | Supports parallel work while constraining cost and runaway behavior |
| Memory | Persistent long-term memory with asynchronous updates and prompt injection | Maintains continuity across sessions without restarting context each time |
| Extensibility | Markdown-defined skills and public or custom skill repositories | Adds capabilities without changing core runtime code |
| Access | REST APIs, web UI, Slack, Telegram, and Feishu integrations | Makes deployment easier inside existing workflows and communication tools |

Where the limits and governance questions start

DeerFlow addresses several problems that usually block agent deployment: safe execution, stateful operation, and coordinated task handling. It does not remove the infrastructure burden. Running agents with Docker or Kubernetes means teams still need container management, secrets handling, model provider configuration, and monitoring for long-running jobs. Open-source availability and an MIT license make customization easier, but they do not eliminate operational responsibility.

Persistent memory also creates governance questions that simpler chat systems can sometimes avoid. If agents retain user preferences, domain knowledge, and prior task context, organizations need clear policy on what is stored, how long it is retained, and who can access it. The more useful the memory becomes, the more important those controls are.
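A retention policy of that kind can be expressed in a few lines. The sketch below is an assumption-heavy illustration: the category names mirror the three kinds of memory mentioned above, but the retention periods, `MemoryRecord`, and `purge_expired` are invented and are not DeerFlow features.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods per memory category
RETENTION = {
    "user_preference": timedelta(days=365),
    "domain_fact": timedelta(days=180),
    "task_context": timedelta(days=30),
}


@dataclass(frozen=True)
class MemoryRecord:
    category: str
    content: str
    created_at: datetime


def is_expired(record: MemoryRecord, now: datetime) -> bool:
    """A record expires once it is older than its category's retention period.

    Unknown categories get zero retention, so they are dropped rather than
    kept by default.
    """
    limit = RETENTION.get(record.category, timedelta(0))
    return now - record.created_at > limit


def purge_expired(records: list[MemoryRecord], now: datetime) -> list[MemoryRecord]:
    """Return only the records that are still within their retention period."""
    return [r for r in records if not is_expired(r, now)]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    records = [
        MemoryRecord("task_context", "old run notes", now - timedelta(days=31)),
        MemoryRecord("task_context", "current run notes", now - timedelta(days=1)),
    ]
    print([r.content for r in purge_expired(records, now)])
```

Writing the policy as data makes the answers to "what is stored" and "how long" reviewable by people who do not read the agent code. Access control would need a separate mechanism that this sketch does not cover.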

The next checkpoint is not whether DeerFlow can demo autonomous behavior. It is how its sandboxing, memory persistence, and sub-agent orchestration hold up under large-scale, multi-agent workloads in production. That is where runtime systems prove whether their controls are sufficient or whether coordination overhead, latency, and infrastructure complexity start to outweigh the autonomy they enable.